Overview

Mastering data ingestion pipelines is crucial for IT managers because well-built pipelines let organizations collect and process data from diverse sources efficiently, which improves decision-making and operational performance. This article outlines key strategies, tools, and best practices for building effective pipelines, with an emphasis on automation, scalability, and real-time processing as data management demands evolve. By implementing these strategies, organizations can streamline their operations and gain a competitive edge in today’s data-driven world.

Introduction

In today’s digital age, effectively managing and utilizing data is a transformative factor for organizations across all sectors. Data ingestion—the process of collecting and importing data from various sources into a target system—forms the backbone of this essential endeavor. It empowers businesses to extract valuable insights for strategic decision-making.

As the demand for real-time information intensifies, grasping the intricacies of data ingestion becomes crucial. Organizations must implement robust pipelines and integrate advanced tools to navigate this complex landscape, ensuring data quality and operational efficiency.

This article explores the critical components of data ingestion that IT managers must understand to maintain a competitive edge in an increasingly data-driven world, examining:

- Best practices

- Common challenges

- Emerging trends

Understanding Data Ingestion: Definition and Importance

A data ingestion pipeline is the systematic process of collecting data from various sources and importing it into a designated target system for storage, processing, and analysis. This foundational step in data management is essential for organizations that want to use their data effectively for informed decision-making and operational efficiency. In today’s competitive environment, timely access to data is not just beneficial but vital, as businesses increasingly depend on data-driven insights to guide their strategies.

Establishing robust data ingestion pipelines is imperative for IT managers, because these pipelines keep data flowing seamlessly across systems and applications. Efficient ingestion makes data more accessible and directly affects business performance. For instance, organizations with efficient collection procedures can shorten the time it takes to obtain insights, accelerating decision-making and improving their responsiveness to market changes.

Avato’s hybrid integration platform guarantees 24/7 uptime for critical integrations and provides real-time monitoring and alerts on system performance. This kind of reliability in ingestion processes is essential for sectors such as banking, healthcare, and government.

Recent trends point to a growing emphasis on automation and real-time data ingestion, both of which are becoming priorities for IT managers heading into 2025. As companies continue to evolve, the ability to ingest data quickly and dependably will be a crucial differentiator. Expert recommendations include adopting cloud-based solutions and advanced processing frameworks such as Apache Spark, which can optimize data handling and minimize latency.

Gustavo Estrada of BC Provincial Health Services Authority emphasizes Avato’s ability to simplify complex projects and deliver results within intended time frames and budgets, which ultimately enhances business value.

Real-world examples show why efficient processing pipelines matter. Teams using Spark to manage image files, for instance, have found that understanding file formats and data structures is essential for effective processing. By adopting binary formats, they have made substantial gains in processing larger image files, which shows how directly ingestion strategy affects operational success.

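Moreover, avoiding the small-files problem is crucial for efficient data management and workflows. This problem arises when a job writes many tiny output files, which slows down reads and strains storage metadata. Spark’s repartition and coalesce functions address it by controlling how many files are written.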
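Deciding how many files to write is mostly arithmetic. The sketch below is plain Python, so it runs without a Spark cluster. It computes a partition count from the dataset size and then chooses between two Spark operations:

- `coalesce` merges existing partitions without a full shuffle, so it can only lower the count.
- `repartition` performs a full shuffle, so it can raise the count or rebalance skewed partitions.

The 128 MB target file size and the name `plan_output_partitions` are assumptions for illustration, not values from the article.

```python
import math

# Assumed target size per output file; tune for your storage and query engine.
TARGET_FILE_BYTES = 128 * 1024 * 1024


def plan_output_partitions(total_bytes: int, current_partitions: int,
                           target_file_bytes: int = TARGET_FILE_BYTES) -> tuple[int, str]:
    """Return (partition_count, method) for rewriting a dataset as fewer, larger files.

    method is "coalesce" when the count only needs to shrink (no full shuffle),
    "repartition" when it must grow (full shuffle), or "none" if it already matches.
    """
    desired = max(1, math.ceil(total_bytes / target_file_bytes))
    if desired < current_partitions:
        return desired, "coalesce"
    if desired > current_partitions:
        return desired, "repartition"
    return desired, "none"


# Example: 1 GiB spread across 2,000 tiny partitions -> merge into 8 files.
print(plan_output_partitions(1024 ** 3, current_partitions=2000))  # (8, 'coalesce')
```

In PySpark, you would apply the result with `df.coalesce(n)` or `df.repartition(n)` just before the write.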

In conclusion, the data ingestion pipeline transcends a mere technical requirement; it serves as a strategic resource that empowers organizations to make informed choices and enhance their operational capabilities. As the landscape of information management continues to evolve, IT managers must prioritize the development of an effective data ingestion pipeline, leveraging Avato’s hybrid integration platform to maintain a competitive edge and maximize the value of legacy systems.

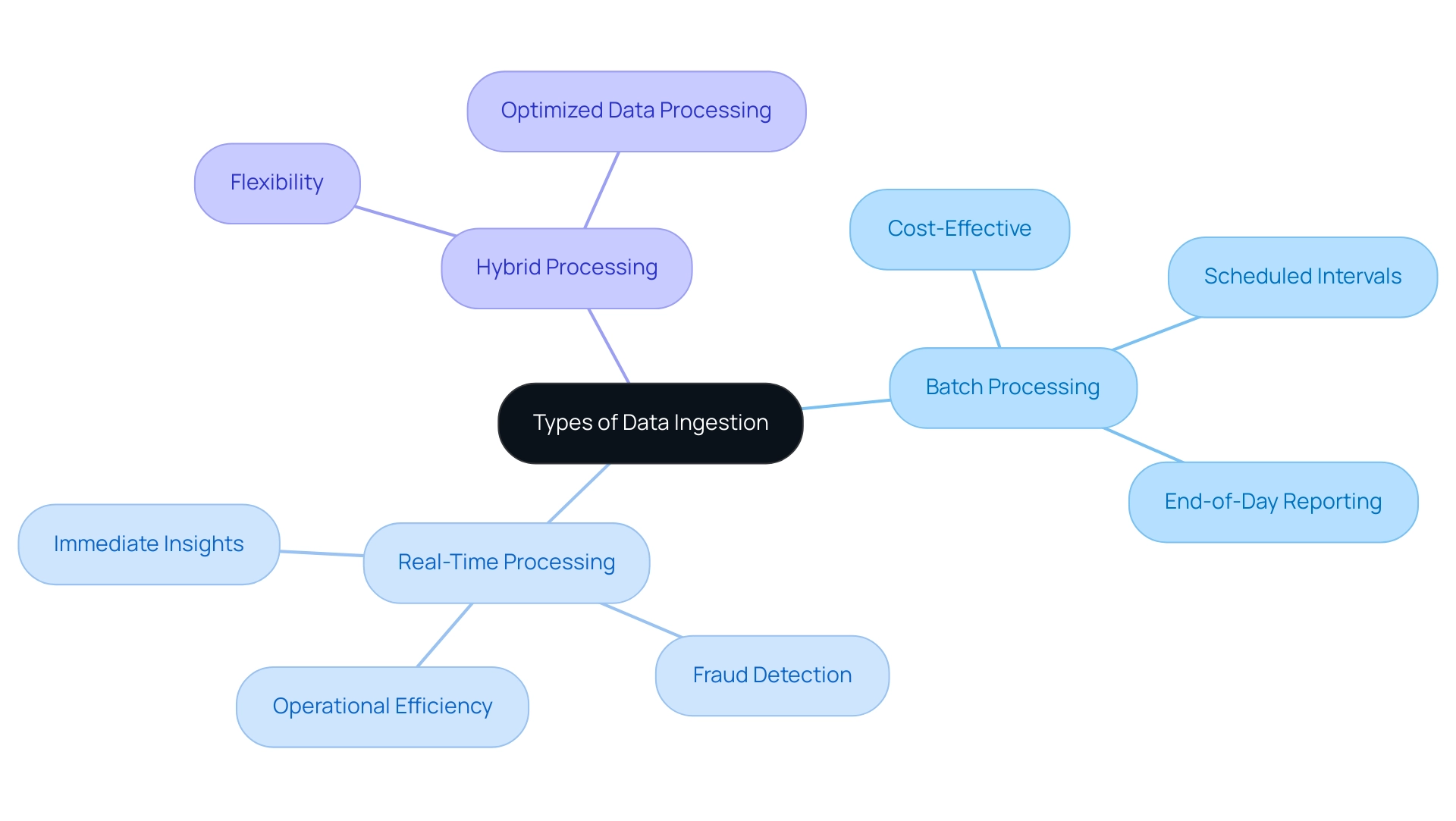

Types of Data Ingestion: Batch, Real-Time, and Hybrid Approaches

Data ingestion pipelines fall into three primary types: batch, real-time, and hybrid. Each type suits specific business needs. Batch ingestion collects and processes data in large groups at scheduled intervals. This makes it especially effective where immediate data is not critical, such as end-of-day reporting.

Batch processing is also cost-effective because it minimizes the need for constant system monitoring, which makes it appealing for organizations that want to streamline operations while managing costs. Real-time ingestion, by contrast, supports a continuous flow of data and enables immediate processing and analysis. That capability is essential for applications requiring instant insights, such as fraud detection in banking.

The benefits of real-time processing are substantial: it lets companies react swiftly to emerging problems, improving operational efficiency and decision-making. A recent case study underscores the growing importance of real-time data observability and monitoring. It found that organizations adopting these practices can significantly reduce detection and resolution times for quality issues in both real-time and batch scenarios.

Hybrid processing combines batch and real-time methods for greater flexibility. It allows organizations to match the processing approach to each use case, adapting to varying data volumes and resource availability. Current trends show a rising preference for hybrid strategies because they let enterprises use the strengths of both approaches while mitigating their weaknesses. Avato’s hybrid integration platform exemplifies this trend. In its collaboration with Coast Capital, it enabled a financial institution’s transition with only a 63-second outage.
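A hybrid approach often comes down to a routing decision per record. The following minimal sketch assumes a caller-supplied urgency rule; here, a hypothetical "large transaction" check stands in for fraud screening. Urgent records go to a real-time handler immediately, and everything else is buffered for a batch load. `HybridIngestor` and its hooks are illustrative names, not part of any product.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class HybridIngestor:
    """Route urgent records to a real-time handler; buffer the rest for batch loads."""
    is_urgent: Callable[[dict], bool]          # hypothetical routing rule
    on_realtime: Callable[[dict], None]        # e.g. push to a stream processor
    on_batch: Callable[[list[dict]], None]     # e.g. bulk-load into a warehouse
    batch_size: int = 1000
    _buffer: list[dict] = field(default_factory=list, init=False)

    def ingest(self, record: dict) -> None:
        if self.is_urgent(record):
            self.on_realtime(record)               # handle immediately
        else:
            self._buffer.append(record)            # defer to the next batch
            if len(self._buffer) >= self.batch_size:
                self.flush()

    def flush(self) -> None:
        """Emit buffered records as one batch; call on a schedule and at shutdown."""
        if self._buffer:
            batch, self._buffer = self._buffer, []
            self.on_batch(batch)


alerts, batches = [], []
ingestor = HybridIngestor(
    is_urgent=lambda r: r.get("amount", 0) > 10_000,   # assumed threshold
    on_realtime=alerts.append,
    on_batch=batches.append,
    batch_size=2,
)
for rec in [{"amount": 50}, {"amount": 20_000}, {"amount": 75}, {"amount": 10}]:
    ingestor.ingest(rec)
ingestor.flush()
print(alerts)   # [{'amount': 20000}]
print(batches)  # [[{'amount': 50}, {'amount': 75}], [{'amount': 10}]]
```

Keeping the routing rule separate from the handlers means a data type can move between batch and real-time handling by changing one predicate.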

Timely quality checks are essential in any ingestion strategy. If data is checked only after it has been consumed, errors propagate downstream and cause delays and reliability issues. Quality checks should therefore run during ingestion and adapt as source data and environments change. Avato states that its platform ensures data quality throughout ingestion. Gustavo Estrada of BC Provincial Health Services Authority noted that Avato streamlines complex projects and delivers outcomes within expected timelines and budgets.

As organizations work through the complexities of data ingestion, they should weigh expert perspectives and evidence on the effectiveness of each approach. The choice between real-time and batch intake, for instance, should be driven by specific business needs, data volume, and resource availability. By understanding the distinctions between these methods and assessing their operational requirements, IT managers can develop strong hybrid ingestion strategies that strengthen their organizations’ capabilities and competitive advantage. These strategies can in turn yield cost savings, faster product delivery, and improved customer satisfaction.

Designing Data Ingestion Pipeline Architecture: Key Considerations

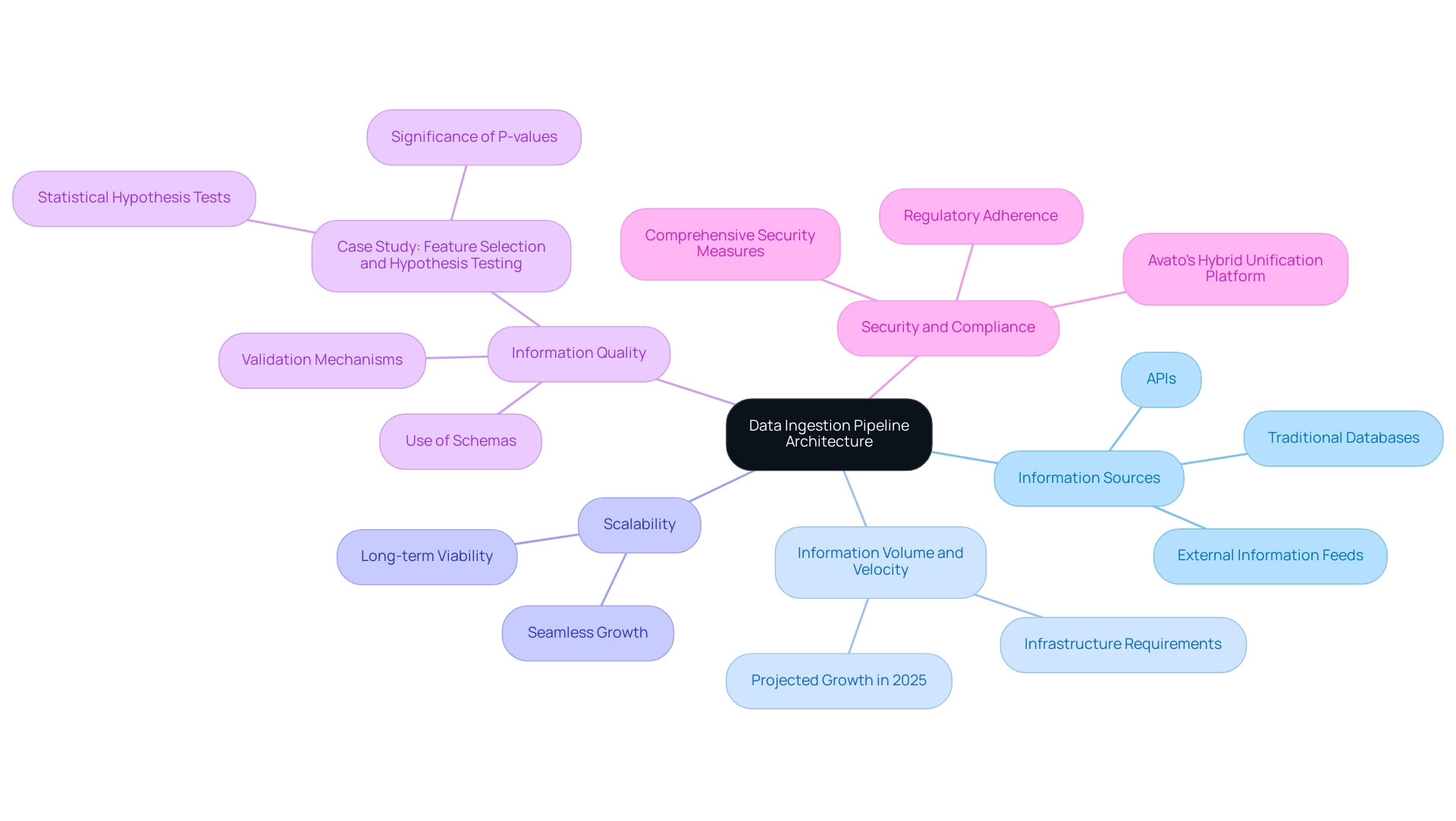

When designing a data ingestion pipeline architecture, IT managers must prioritize several critical factors to ensure both efficiency and effectiveness.

- Data Sources: Begin by identifying every source that will feed the pipeline. This includes traditional databases as well as APIs and external data feeds, which can significantly enrich the content being ingested.

- Data Volume and Velocity: Assess the expected volume and speed of incoming data. Global data volumes are projected to keep growing sharply in 2025. Understanding these metrics is therefore crucial for sizing the infrastructure and processing capacity needed to absorb the influx without bottlenecks.

- Scalability: Design the architecture with scalability in mind. As data complexity and volume increase, the ability to scale seamlessly is vital. A robust architecture accommodates future growth without a complete overhaul, which ensures long-term viability.

- Data Quality: Implement rigorous validation and cleansing mechanisms. Data quality matters throughout ingestion because it directly affects the reliability of analytics and machine learning models. A case study on feature selection and hypothesis testing, for example, shows how statistical hypothesis tests can determine which features are relevant, so that only significant information is used in model training. Schemas also reduce error-related costs, because catching issues at the point of entry is far cheaper than correcting them after decisions have been made. Avato’s user manual likewise emphasizes schemas as a way to prevent costly errors.

- Security and Compliance: Integrate comprehensive security measures to protect sensitive data. Adhere to applicable regulations, particularly in industries such as banking and healthcare, where breaches can have severe consequences. Avato’s hybrid integration platform is designed to meet these compliance requirements and to support robust security evaluations. This reflects the firm’s focus on technology that simplifies complex integration processes.
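Volume and velocity translate directly into capacity numbers. The back-of-envelope sketch below assumes you know, or can estimate, four inputs:

- the average event rate
- the average event size
- a peak multiplier
- the sustained throughput that one partition or worker can handle

From these it derives peak bandwidth, a partition count, and storage growth. All inputs are hypothetical placeholders to replace with your own measurements, including the 10 MB/s per-partition figure in the example.

```python
import math


def estimate_ingest_capacity(events_per_sec: float, avg_event_bytes: int,
                             peak_factor: float, per_partition_mb_s: float,
                             retention_days: int) -> dict[str, float]:
    """Rough sizing for an ingestion tier from volume and velocity estimates.

    Uses decimal units (1 MB = 10**6 bytes, 1 GB = 10**9 bytes).
    """
    peak_mb_s = events_per_sec * peak_factor * avg_event_bytes / 1e6
    daily_gb = events_per_sec * avg_event_bytes * 86_400 / 1e9   # at the average rate
    return {
        "peak_mb_per_sec": peak_mb_s,
        "partitions_needed": math.ceil(peak_mb_s / per_partition_mb_s),
        "daily_gb": daily_gb,
        "retained_gb": daily_gb * retention_days,
    }


# 5,000 events/s of ~1 KB each, 3x peaks, 10 MB/s per partition, 7-day retention.
print(estimate_ingest_capacity(5_000, 1_000, 3.0, 10.0, 7))
# {'peak_mb_per_sec': 15.0, 'partitions_needed': 2, 'daily_gb': 432.0, 'retained_gb': 3024.0}
```

Throughput is sized to the peak rather than the average so that bursts do not turn into backlogs. Storage, by contrast, grows with the average rate.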

By addressing these essential factors, IT managers can create a resilient and efficient data ingestion pipeline that not only meets present needs but is also prepared for future challenges. Successful examples in healthcare illustrate how well-structured collection pipelines can result in enhanced information accessibility and operational efficiency, ultimately fostering better decision-making and innovation. Moreover, Avato’s dedication to ensuring 24/7 uptime for essential integrations underscores the significance of reliability in information processing pipelines.

As Gustavo Estrada observed, Avato streamlines complex projects and delivers outcomes within expected time limits and budgets. A well-organized ingestion approach offers a similar benefit, helping businesses future-proof their operations.

Step-by-Step Guide to Building a Data Ingestion Pipeline

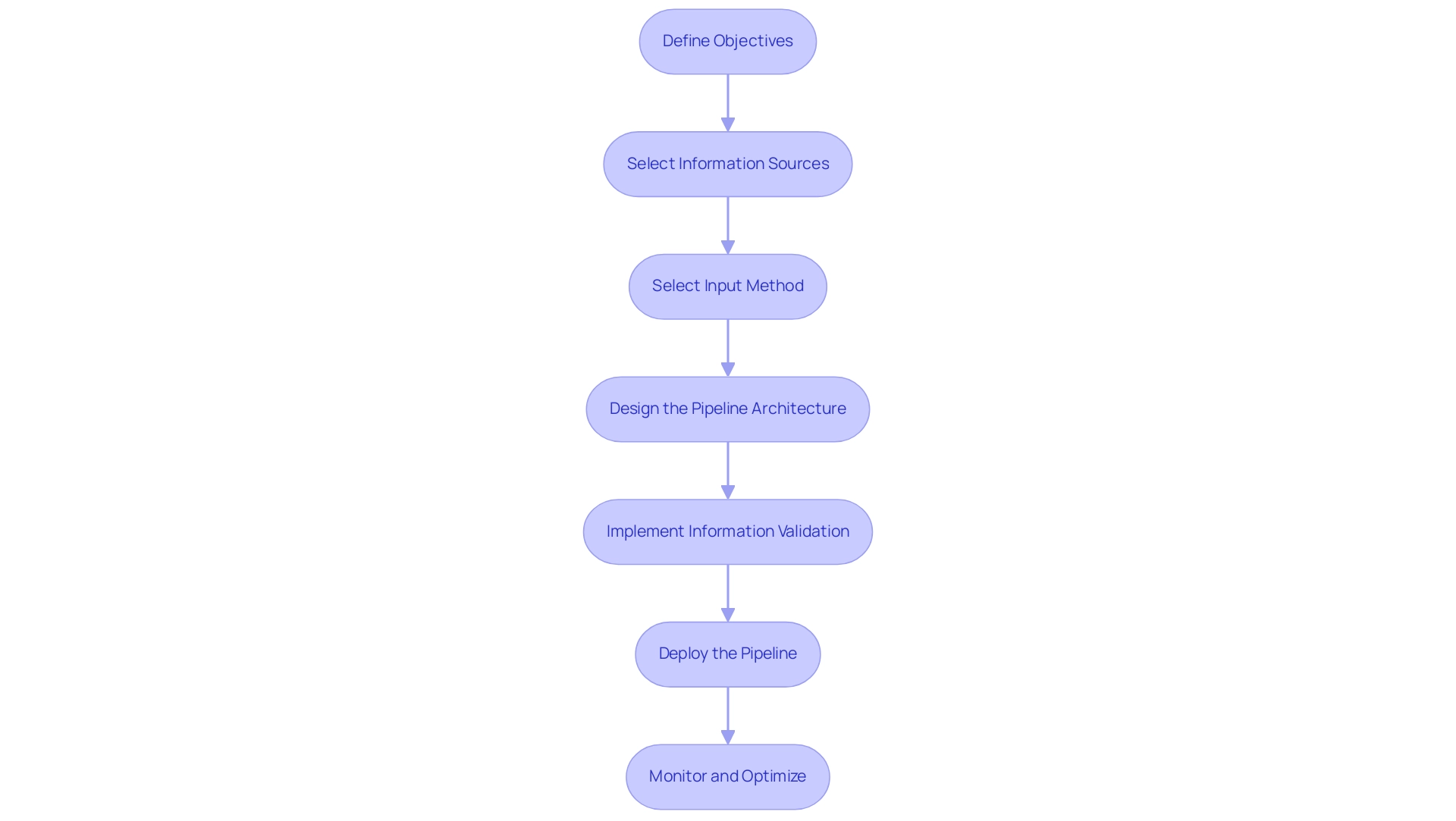

Building a data ingestion pipeline is a critical process that involves several essential steps to ensure successful implementation and optimal performance.

Define Objectives: Begin by clearly outlining the goals of the information ingestion project. What are you aiming to achieve? This includes specifying the types of information to be ingested and the intended use cases, which will guide the entire process.

Select Information Sources: Identify and prioritize the sources that will be integrated into the pipeline. This step is crucial as it determines the quality and relevance of the information that will feed into your systems. Consider: which sources will provide the most valuable insights?

Select Ingestion Method: Choose a batch, real-time, or hybrid approach based on the established goals and the characteristics of the data. For example, real-time ingestion may be essential for applications that need instant processing, while batch collection can be adequate for less time-critical content. Which method aligns best with your needs?

Design the Pipeline Architecture: Create a comprehensive blueprint of the pipeline architecture. This should encompass all essential components for information collection, processing, and storage, ensuring that the design accommodates scalability and flexibility. How will you structure your pipeline for future growth?
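One way to keep a pipeline blueprint flexible is to model it as a chain of independent stages that records stream through. The sketch below assumes records are plain dicts and that each stage is a generator function. It composes collection, processing, and storage steps, so new stages can be added without redesigning the rest. `build_pipeline`, `drop_empty`, and `tag_source` are hypothetical names for illustration.

```python
from typing import Callable, Iterator

Record = dict
Stage = Callable[[Iterator[Record]], Iterator[Record]]


def build_pipeline(*stages: Stage) -> Stage:
    """Compose stages left to right; records flow lazily through each one."""
    def run(records: Iterator[Record]) -> Iterator[Record]:
        for stage in stages:
            records = stage(records)
        return records
    return run


def drop_empty(records: Iterator[Record]) -> Iterator[Record]:
    """Processing stage: discard empty records."""
    for r in records:
        if r:
            yield r


def tag_source(source: str) -> Stage:
    """Processing stage factory: record where each record came from."""
    def stage(records: Iterator[Record]) -> Iterator[Record]:
        for r in records:
            yield {**r, "_source": source}
    return stage


pipeline = build_pipeline(drop_empty, tag_source("crm"))
stored = list(pipeline(iter([{"id": 1}, {}, {"id": 2}])))   # list() stands in for a storage sink
print(stored)  # [{'id': 1, '_source': 'crm'}, {'id': 2, '_source': 'crm'}]
```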

Implement Data Validation: Establish robust validation rules to ensure data quality and integrity throughout ingestion, since accurate analysis and decision-making depend on them. As the case study titled ‘Planning Data Transformations’ highlights, defining the necessary transformations is critical for preparing data for analysis. These transformations include cleaning, standardizing, and enriching. Schemas are particularly important at this step because they catch errors early and avoid the cost of later-stage corrections. For instance, organizations can save up to 30% in correction expenses by implementing schemas effectively.
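Schema checks at the point of ingestion are straightforward to express. The following sketch assumes a hypothetical payments schema that maps each field name to an expected type and a required flag. It reports violations before a record is loaded, then applies simple cleaning and standardization to records that pass. The schema, field names, and helper functions are illustrative and are not taken from any specific product.

```python
# Hypothetical schema: field name -> (expected type, required?)
SCHEMA: dict[str, tuple[type, bool]] = {
    "customer_id": (str, True),
    "amount": (float, True),
    "currency": (str, True),
    "note": (str, False),
}


def validate(record: dict, schema: dict[str, tuple[type, bool]] = SCHEMA) -> list[str]:
    """Return schema violations; an empty list means the record may be loaded."""
    errors = []
    for name, (expected, required) in schema.items():
        value = record.get(name)
        if value is None:
            if required:
                errors.append(f"missing required field '{name}'")
            continue
        if not isinstance(value, expected):
            errors.append(f"'{name}' should be {expected.__name__}, "
                          f"got {type(value).__name__}")
    for name in sorted(record.keys() - schema.keys()):
        errors.append(f"unexpected field '{name}'")
    return errors


def standardize(record: dict) -> dict:
    """Clean and standardize a record that has already passed validation."""
    out = dict(record)
    out["customer_id"] = out["customer_id"].strip()
    out["currency"] = out["currency"].strip().upper()
    return out


good = {"customer_id": " c-17 ", "amount": 12.5, "currency": "eur"}
bad = {"amount": "12.5", "region": "west"}
print(validate(good))     # []
print(standardize(good))  # {'customer_id': 'c-17', 'amount': 12.5, 'currency': 'EUR'}
print(validate(bad))
```

Because `isinstance(12, float)` is false, this schema also rejects an integer amount. Whether to coerce such values or reject them is a policy decision to make explicitly.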

Deploy the Pipeline: Implement the pipeline using the selected tools and technologies. Ensure that it is properly configured for optimal performance, considering factors such as volume and processing speed. Avato guarantees 24/7 uptime for critical connections, which is vital for sustaining operational continuity in fields such as banking, healthcare, and government.

Monitor and Optimize: Continuously monitor the pipeline’s performance, utilizing real-time monitoring tools to track system performance and identify any bottlenecks. Regular optimization adjustments will enhance efficiency and reliability, ensuring that the pipeline meets evolving business needs. Utilizing streaming technologies such as Apache Flink or Apache Beam can further improve real-time information processing capabilities, making the pipeline more robust and responsive to business demands. Avato’s Hybrid Integration Platform supports this continuous monitoring, enabling entities to maximize integration performance and quickly address any issues that arise.

By following these steps, organizations can substantially improve their data ingestion procedures and the success rate of their ingestion initiatives. As Gustavo Estrada highlighted, Avato streamlines complex projects and delivers outcomes within expected timelines and budgets. The platform also extends the value of legacy systems, lowers expenses, and improves operational capabilities. This makes it a useful resource for organizations modernizing their integration efforts.

Common Challenges in Data Ingestion and How to Overcome Them

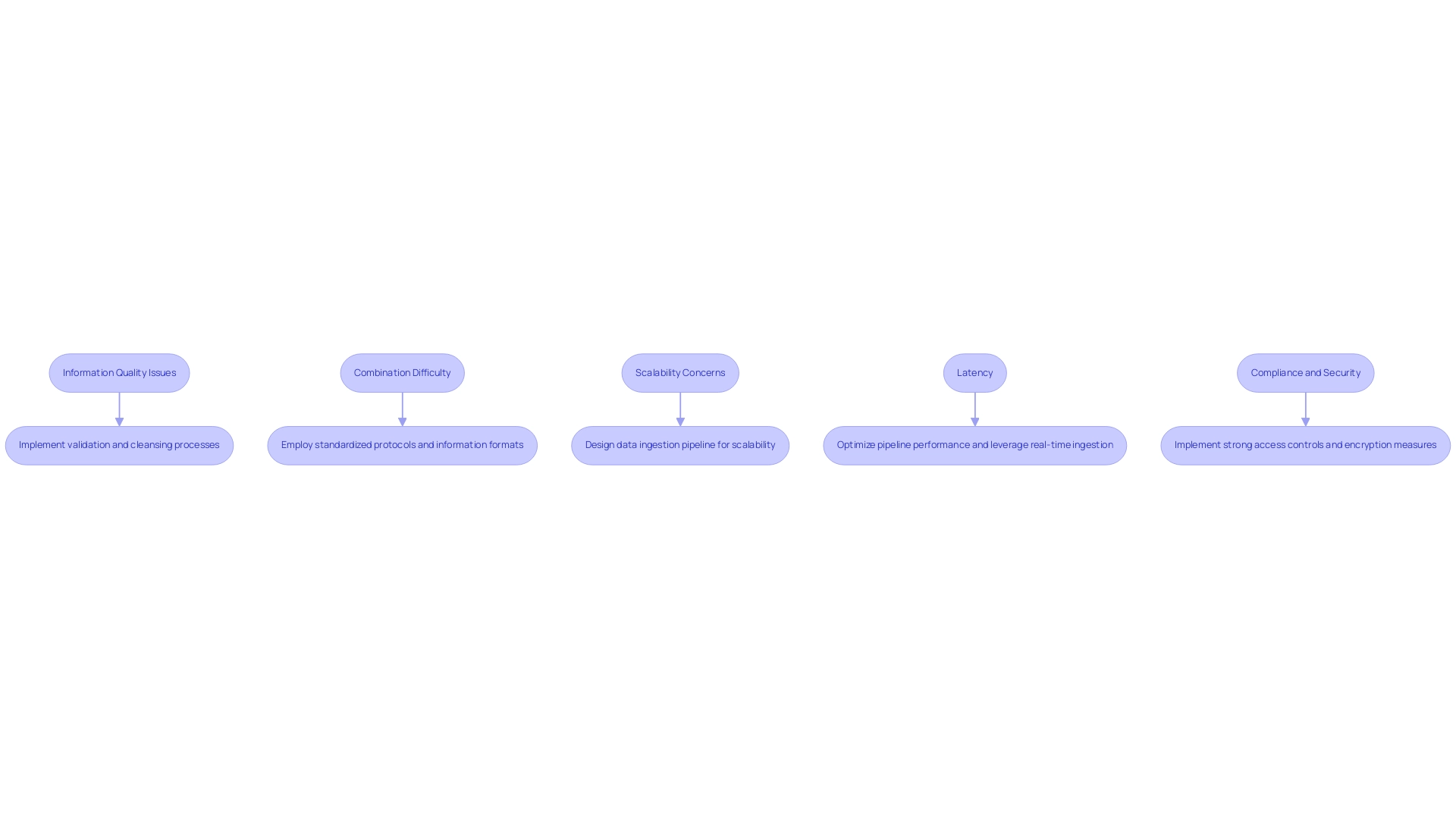

Data ingestion presents several significant challenges that organizations must navigate to ensure effective data management and utilization.

- Data Quality Issues: Inconsistent or flawed data can severely undermine decision-making, so robust validation and cleansing processes are crucial. Statistics suggest that organizations often face significant quality problems, with up to 30% of data being flawed, which leads to misguided strategies and wasted resources. The lack of a Business Intelligence (BI) tool can make matters worse by forcing employees to rely on manual spreadsheets, which increases the risk of errors. Avato’s hybrid integration platform addresses these challenges by providing a solid foundation for quality management, so organizations can rely on their data for critical decisions.

- Integration Complexity: Merging data from various sources is challenging. Standardized protocols and data formats greatly simplify the process, allowing smoother data flows and reducing the time spent on integration tasks. For example, organizations that have adopted API-based ingestion, using REST APIs for bulk and streaming interactions, report improved accessibility and reliability along with faster, more scalable integration. As Gustavo Estrada from BC Provincial Health Services Authority noted, “Avato has the ability to simplify complex projects and deliver results within desired time frames and budget constraints,” highlighting the importance of effective integration solutions.

- Scalability Concerns: As data volumes continue to grow rapidly, pipelines may struggle to keep pace. Designing the pipeline for scalability from the outset mitigates this issue. Organizations that address scalability proactively can handle increased loads without compromising performance. Avato’s platform is architected to support 12 levels of interface maturity, allowing businesses to balance speed and sophistication in their integration efforts.

- Latency: Processing delays hinder real-time decision-making. Optimizing pipeline performance and adopting real-time ingestion methods are effective ways to address this challenge. For instance, the San Francisco Giants improved their time to insights by 50% after implementing a modern data system, showing the benefit of reducing processing latency. Avato’s solutions are designed to accelerate secure system integration so that organizations can act on insights without delay.

- Compliance and Security: Adhering to regulations while keeping data secure is critical, especially in sectors like banking and healthcare. Strong access controls and encryption help protect sensitive data from breaches and support regulatory compliance. Organizations must prioritize these measures to build trust and maintain operational integrity. Avato’s platform is trusted by banks, healthcare providers, and government entities as a solid foundation for digital transformation initiatives.
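Standardizing formats usually means writing one small adapter per source that maps its records onto a shared shape. The sketch below assumes two hypothetical sources:

- a legacy core-banking export, with amounts in cents and epoch-second timestamps
- a JSON REST API, with a nested account object and ISO-8601 timestamps that include offsets

The field names are invented for illustration.

```python
from datetime import datetime, timezone


def from_core_banking(row: dict) -> dict:
    """Hypothetical legacy export: integer cents, Unix epoch seconds."""
    return {
        "account_id": str(row["ACCT_NO"]),
        "amount": row["AMT_CENTS"] / 100,
        "occurred_at": datetime.fromtimestamp(row["TS"], tz=timezone.utc).isoformat(),
    }


def from_rest_api(payload: dict) -> dict:
    """Hypothetical JSON API: nested account, ISO-8601 timestamp with a UTC offset."""
    ts = datetime.fromisoformat(payload["timestamp"])  # assumes an explicit offset
    return {
        "account_id": payload["account"]["id"],
        "amount": float(payload["amount"]),
        "occurred_at": ts.astimezone(timezone.utc).isoformat(),
    }


ADAPTERS = {"core_banking": from_core_banking, "rest_api": from_rest_api}


def normalize(source: str, raw: dict) -> dict:
    """Map a raw record from a known source onto the shared format."""
    return {**ADAPTERS[source](raw), "source": source}


a = normalize("core_banking", {"ACCT_NO": 42, "AMT_CENTS": 1999, "TS": 0})
b = normalize("rest_api", {"account": {"id": "42"}, "amount": "19.99",
                           "timestamp": "1970-01-01T01:00:00+01:00"})
print(a["occurred_at"] == b["occurred_at"])  # True
```

Adding a new source then means writing one more adapter rather than changing downstream consumers.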

By understanding these common challenges and implementing targeted solutions, organizations can enhance their information gathering processes, leading to improved quality and more informed decision-making, all while leveraging Avato’s pioneering hybrid integration solutions.

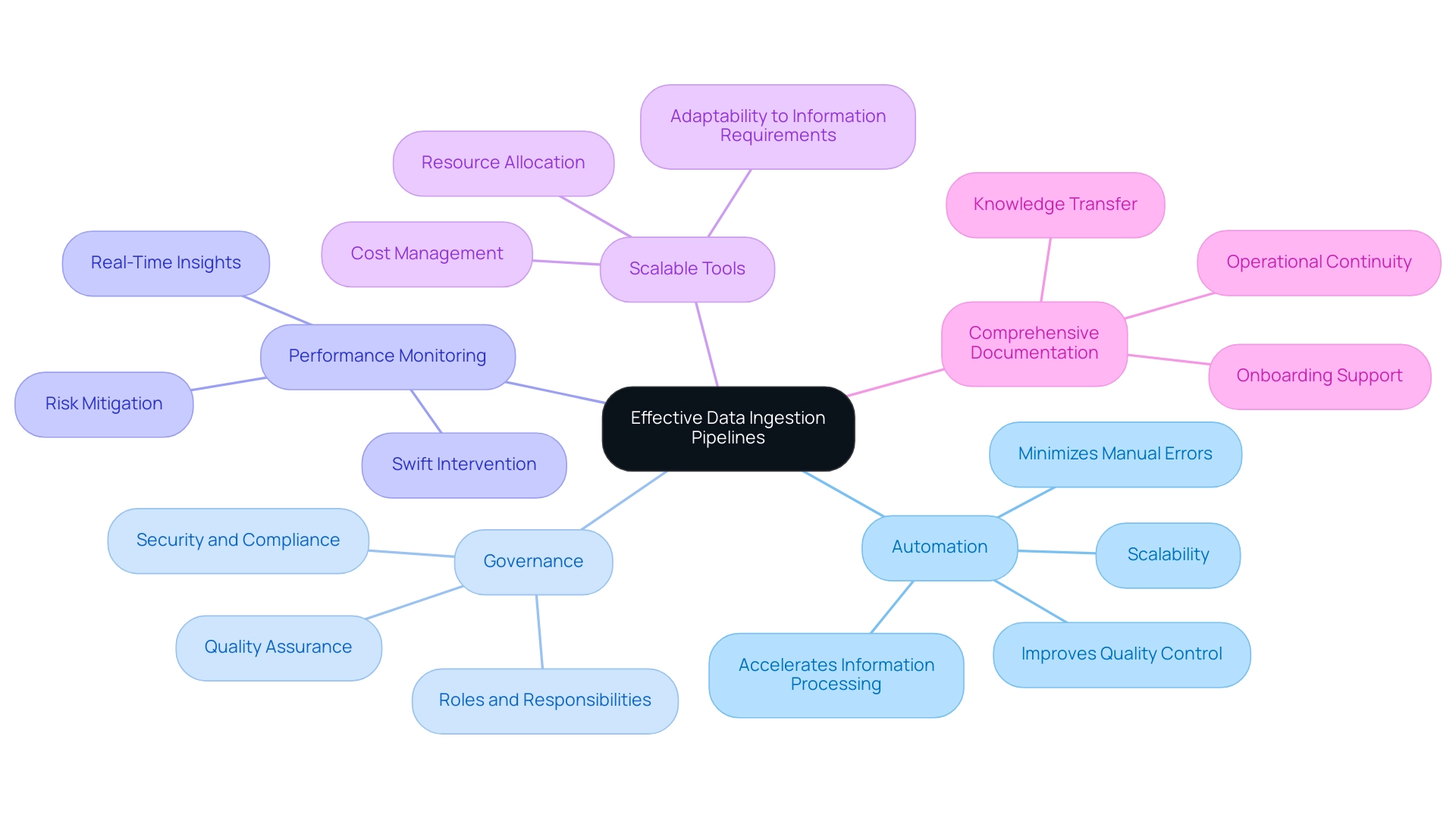

Best Practices for Effective Data Ingestion Pipelines

To optimize data ingestion pipelines effectively, IT managers must adhere to several best practices that enhance efficiency and reliability.

- Embrace automation to minimize manual errors and accelerate processing. Automated ingestion streamlines workflows and significantly reduces processing time. This improves quality control and scalability and removes the weaknesses of manual processes. As Gustavo Estrada from BC Provincial Health Services Authority highlights, “Avato has simplified complex projects and delivered results within desired time frames and budget constraints.”

- Establish governance. A robust governance framework is critical for ensuring quality, security, and compliance. It should clearly define the roles, responsibilities, and processes for data management, strengthening the integrity and reliability of ingested data. Managing separate pipeline architectures for streaming and batch processing adds complexity, which makes a strong governance framework all the more necessary to mitigate risk.

- Monitor performance continuously. Robust analytics provide real-time insight into pipeline performance, enabling swift intervention when anomalies or failures occur. This proactive approach reduces the risk of data loss or corruption and keeps ingestion efficient and reliable.

- Use scalable tools. Choose tools and technologies that can adapt to changing data requirements. As organizations generate larger volumes of data, storage and server costs rise. Scalable solutions are therefore essential for growing without incurring excessive costs. Supporting multiple pipeline architectures adds further complexity and requires additional resources to manage.

- Document processes comprehensively. Thorough documentation of ingestion processes, configurations, and changes is essential for knowledge transfer and troubleshooting. It helps maintain operational continuity and speeds onboarding by giving new team members a clear picture of existing workflows.
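Continuous monitoring can start with a handful of per-batch metrics checked against thresholds. The sketch below times a batch, counts failed records, and logs an alert when either threshold is breached. It assumes a 1% failure-rate ceiling and a 100 records-per-second throughput floor; both are hypothetical defaults. `run_monitored`, `BatchMetrics`, and `check_batch` are illustrative names.

```python
import logging
import time
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("ingestion.monitor")


@dataclass
class BatchMetrics:
    records_in: int
    records_failed: int
    seconds: float

    @property
    def failure_rate(self) -> float:
        return self.records_failed / self.records_in if self.records_in else 0.0

    @property
    def throughput(self) -> float:
        return self.records_in / self.seconds if self.seconds > 0 else 0.0


def check_batch(m: BatchMetrics, max_failure_rate: float = 0.01,
                min_throughput: float = 100.0) -> list[str]:
    """Return (and log) alert messages for a finished batch."""
    alerts = []
    if m.failure_rate > max_failure_rate:
        alerts.append(f"failure rate {m.failure_rate:.1%} exceeds {max_failure_rate:.1%}")
    if m.throughput < min_throughput:
        alerts.append(f"throughput {m.throughput:.0f} rec/s below {min_throughput:.0f}")
    for alert in alerts:
        logger.warning(alert)
    return alerts


def run_monitored(batch: list, process: Callable[[object], None]) -> BatchMetrics:
    """Process a batch record by record, counting failures instead of aborting."""
    start = time.perf_counter()
    failed = 0
    for record in batch:
        try:
            process(record)
        except Exception:
            failed += 1
            logger.exception("record failed: %r", record)
    metrics = BatchMetrics(len(batch), failed, time.perf_counter() - start)
    check_batch(metrics)
    return metrics
```

Counting failures rather than stopping at the first one keeps a single bad record from blocking the batch, while still surfacing the problem.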

By implementing these best practices, IT managers can significantly enhance the efficiency and effectiveness of their data ingestion pipelines. The execution of automated information intake, as emphasized in the case study ‘Automated Information Intake Benefits,’ leads to quicker information processing, enhanced quality control, and scalability of intake efforts across enterprises, ultimately resulting in improved information management and utilization.

Essential Tools and Technologies for Data Ingestion

An efficient data ingestion pipeline is essential for companies aiming to leverage the potential of their information. Several tools and technologies stand out in this domain, each offering unique capabilities tailored to various needs:

- Apache Kafka: Renowned for its distributed streaming architecture, Apache Kafka excels at real-time data ingestion and processing. Its ability to handle high-throughput data streams makes it a favored option for organizations that need prompt insights from their data.

- Apache NiFi: This tool automates data flows between systems and offers a user-friendly interface that simplifies the design of complex pipelines. NiFi’s adaptability makes it easy to integrate diverse data sources, which suits organizations with varied collection requirements.

- Talend: An open-source data integration platform, Talend offers strong capabilities for both batch and real-time collection. Its extensive connector library supports seamless integration with numerous sources, helping organizations streamline their workflows.

- Fivetran: This cloud-based service automates loading data from various sources into storage systems, significantly reducing the time and effort spent on preparation. Its automated connectors keep data up to date, letting businesses focus on analysis rather than management.

- AWS Glue: A fully managed ETL service, AWS Glue streamlines data preparation for analytics and supports both batch and streaming ingestion. Its serverless design lets companies scale their processing without managing infrastructure.

- Matillion: Known for its library of pre-built connectors for different sources, Matillion simplifies integration and makes it easier for organizations to link diverse systems.

- Dagster: Dagster improves data quality by modeling dependencies and providing a Python API for testing, which helps keep ingestion processes reliable.

When choosing the appropriate tools, entities must consider particular requirements such as information volume, velocity, and the complexity of integration. The selection of tools can significantly affect the efficiency and effectiveness of the data ingestion pipeline, ultimately influencing the entity’s ability to utilize information for strategic decision-making.

In 2025, the market for data processing technologies continues to evolve, with Apache Kafka and Talend emerging as leaders thanks to their strong capabilities and wide adoption. Case studies highlight organizations using Apache Kafka effectively for real-time processing in dynamic settings. Fluentd is another notable open-source tool. It is built to collect, parse, and forward logs and other data from many sources, and it includes built-in reliability features.

As the landscape of information collection tools expands, staying informed about the latest technologies and trends is essential for IT managers aiming to optimize their strategies. Avato distinguishes itself in this competitive landscape by providing unmatched value through speed, security, and ease of connection. With a deep dedication to architecting the technology foundation required to power rich, connected customer experiences, Avato significantly reduces costs while enhancing operational capabilities.

Avato’s hybrid integration platform offers sophisticated data mapping, real-time processing, and seamless connectivity to various sources, helping enterprises manage their data ingestion pipelines efficiently. As Gustavo Estrada, a satisfied client, put it, “Avato simplifies complex projects and delivers results within desired time frames and budget limitations.” That track record makes Avato a valuable partner for organizations tackling data acquisition challenges.

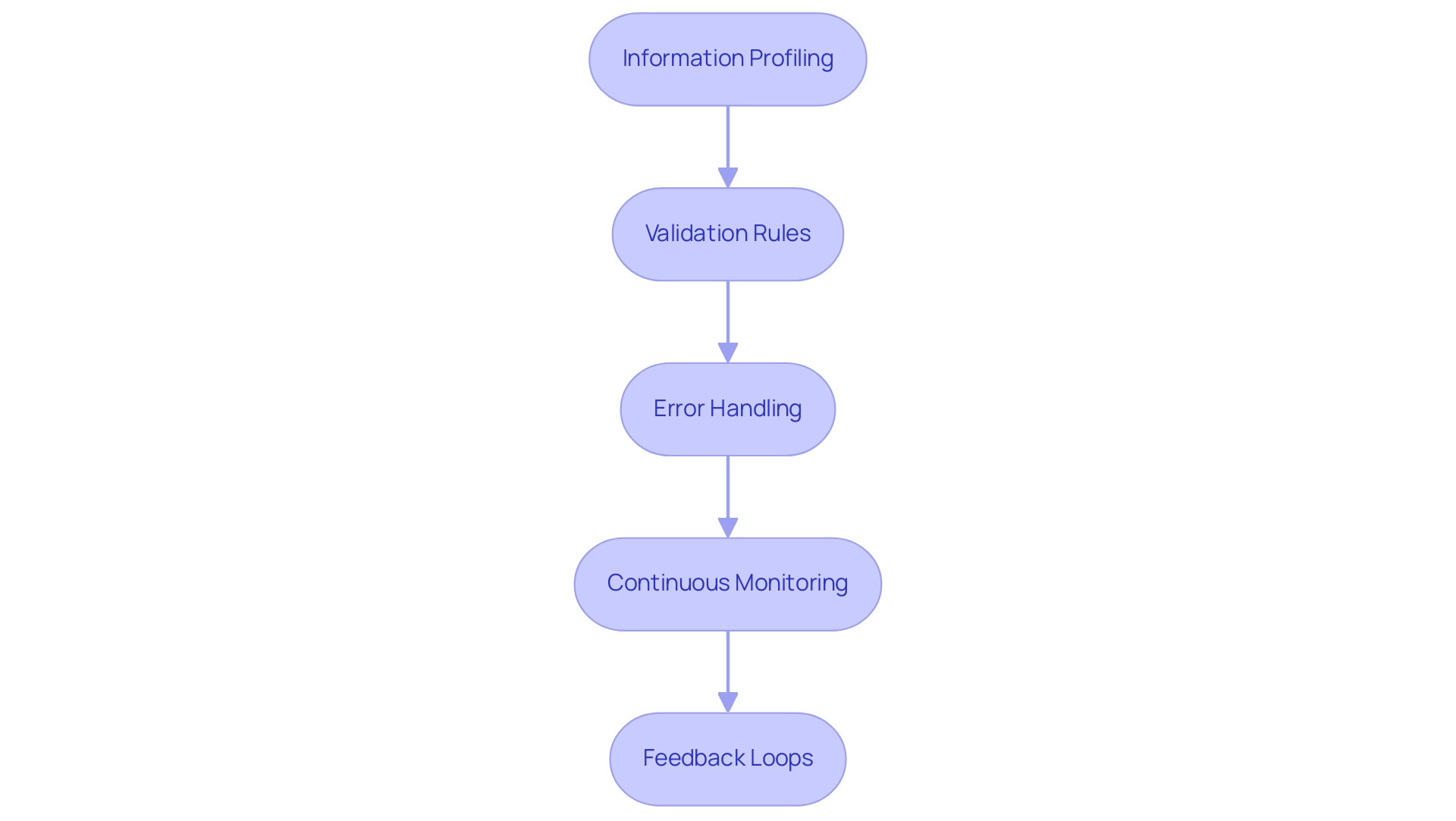

Ensuring Data Quality and Validation in Ingestion Pipelines

Information quality and validation are critical components of an efficient data ingestion pipeline. IT managers must prioritize essential practices to ensure the integrity and reliability of ingested data:

- Data Profiling: Analyze incoming data thoroughly to assess its structure, quality, and consistency before ingestion. This step surfaces potential issues early, allowing timely intervention.

- Validation Rules: Develop and implement robust validation rules that check for accuracy, completeness, and adherence to predefined standards. These rules act as safeguards, preventing incorrect information from entering the data ingestion pipeline.

- Error Handling: Establish comprehensive error handling mechanisms that facilitate the graceful management of issues. This includes logging errors, notifying relevant stakeholders, and providing clear pathways for resolution.

- Continuous Monitoring: Engage in regular monitoring of information quality throughout the data ingestion pipeline process. This proactive approach allows for the identification and correction of issues in real-time, minimizing the risk of quality degradation. Avato’s hybrid integration platform offers robust analytics capabilities that continuously monitor performance, enabling organizations to optimize operations and enhance customer experiences. To implement continuous monitoring effectively, refer to the user manuals provided by Avato, which outline specific methodologies and tools for tracking quality metrics.

- Feedback Loops: Implement feedback loops that facilitate the continuous enhancement of information quality. By utilizing insights obtained from information usage and analysis, companies can refine their data ingestion pipeline methods and improve overall information integrity.
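Profiling, validation rules, and error handling fit together naturally. The sketch below assumes records arrive as dicts and uses two hypothetical rules. It first profiles a sample, reporting the null rate and observed types for each field. It then routes each record either to the valid set or to a dead-letter list that records which rules it broke, so failures can be reviewed and fed back into the rules. Function and rule names are illustrative.

```python
from collections import Counter
from typing import Callable

Rule = Callable[[dict], bool]


def profile(records: list[dict]) -> dict[str, dict]:
    """Per-field null rate and value types, computed before ingestion."""
    fields = sorted({key for r in records for key in r})
    report = {}
    for name in fields:
        values = [r.get(name) for r in records]
        report[name] = {
            "null_rate": sum(v is None for v in values) / len(records),
            "types": Counter(type(v).__name__ for v in values if v is not None),
        }
    return report


# Hypothetical validation rules: description -> predicate
RULES: dict[str, Rule] = {
    "id present": lambda r: r.get("id") is not None,
    "amount non-negative": lambda r: isinstance(r.get("amount"), (int, float))
                                     and r["amount"] >= 0,
}


def split_valid(records: list[dict], rules: dict[str, Rule] = RULES):
    """Return (valid, dead_letter); dead-letter entries keep the broken rule names."""
    valid, dead_letter = [], []
    for r in records:
        broken = [name for name, check in rules.items() if not check(r)]
        if broken:
            dead_letter.append({"record": r, "errors": broken})
        else:
            valid.append(r)
    return valid, dead_letter


sample = [{"id": 1, "amount": 10}, {"id": None, "amount": 5}, {"id": 3, "amount": -2}]
report = profile(sample)
valid, dead = split_valid(sample)
pass_rate = len(valid) / len(sample)   # a quality metric worth tracking over time
```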

Integrating these practices not only enhances information quality but also ensures that organizations can manage their information more effectively, ultimately leading to improved decision-making and operational efficiency. As organizations increasingly adopt AI and machine learning, maintaining high information quality will be crucial for achieving superior results and fostering innovation. Notably, Avato guarantees round-the-clock availability for essential connections, enhancing the reliability of information processing procedures.

Founded on the principle of simplifying complex integration challenges, Avato stands out in the industry. Gustavo Estrada from BC Provincial Health Services Authority noted that Avato excels in simplifying complex projects and delivering results within desired time frames and budget constraints. Furthermore, the case study “Data Acquisition Challenges and Solutions” underscores the importance of implementing a robust information collection strategy that includes observability, significantly reducing time spent on cleansing and enhancing overall quality. Businesses that embrace AI and machine learning can achieve improved outcomes in quality through technologies that learn and enhance over time, making it vital to adapt to these evolving practices.

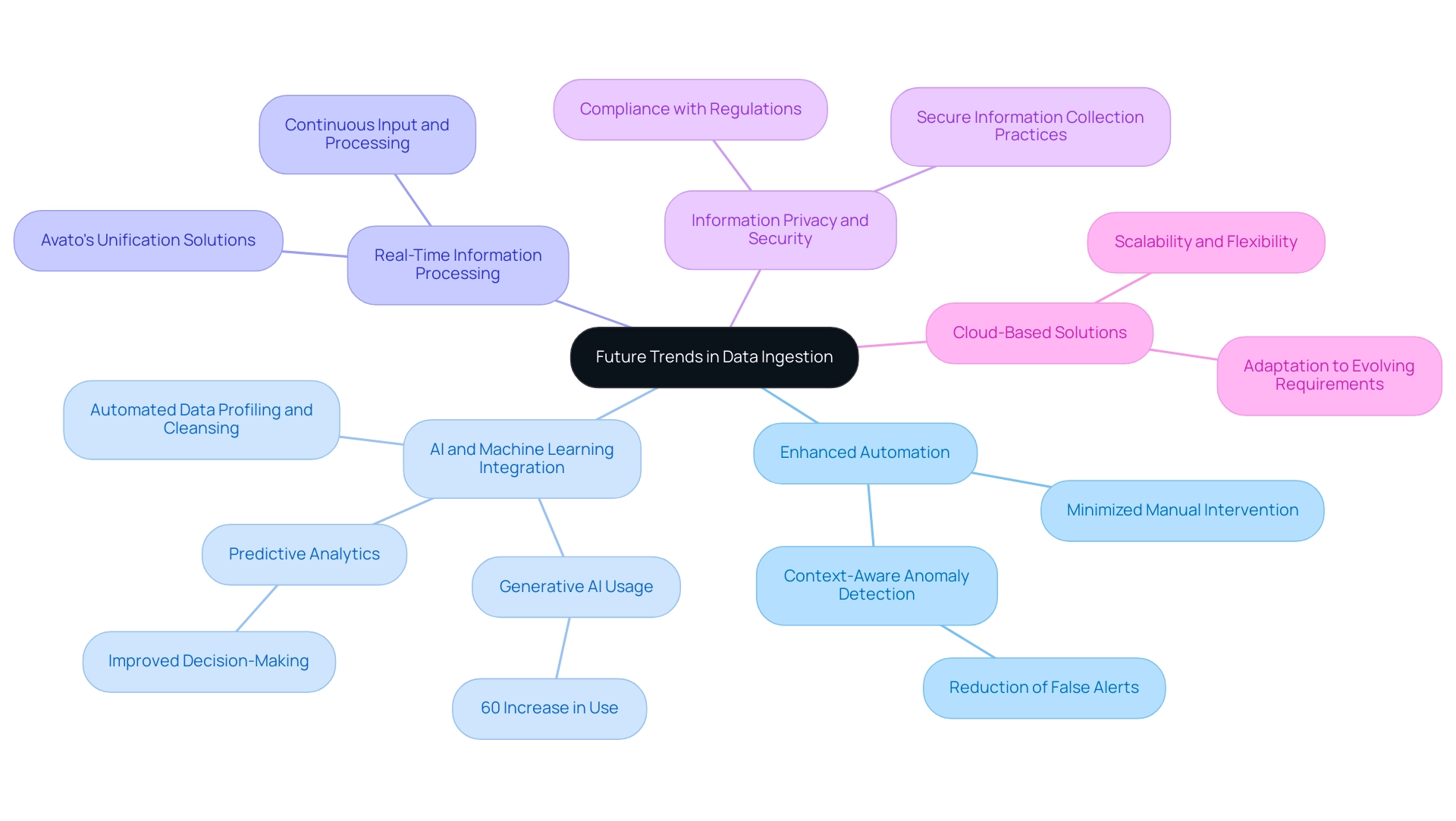

Future Trends in Data Ingestion: What IT Managers Need to Know

As technology continues to evolve, several key trends are shaping the future of data ingestion, particularly for IT managers in the banking sector:

- Enhanced Automation: Automation is set to transform ingestion by minimizing manual intervention and reducing the likelihood of errors. Future platforms are expected to use more refined, context-aware anomaly detection to cut down on false alerts. This streamlines operations and improves the overall efficiency of the pipeline, freeing teams to focus on strategic initiatives.

- AI and Machine Learning Integration: The integration of AI and machine learning into information ingestion processes is redefining how organizations manage quality. Generative AI, in particular, has emerged as a powerful tool, with a 60% increase in its use for enhancing customer experience through sophisticated chatbots and virtual assistants. These technologies facilitate predictive analytics, allowing businesses to anticipate their needs and improve decision-making. For instance, advanced tools are now being integrated into the data ingestion pipeline to automate profiling, validation, and cleansing of information as it is ingested, significantly boosting reliability and operational efficiency. This evolution underscores the importance of information quality and trust in contemporary data lakes, as entities transition from ungoverned repositories to systems that prioritize these factors.

- Real-Time Data Processing: The growing demand for instant insights is driving adoption of technologies for continuous ingestion and processing. Avato’s integration solutions are designed to support real-time data ingestion, so organizations can access and analyze data as it streams in. That capability is essential for making informed choices in fast-paced environments like banking.

- Data Privacy and Security: With heightened concerns about privacy, organizations are emphasizing secure collection practices to ensure regulatory compliance. Avato’s integration solutions are architected for secure transactions and are trusted by the banking, healthcare, and government sectors. This focus on security is vital for maintaining trust and safeguarding sensitive data, particularly in regulated industries.

- Cloud-Based Solutions: The ongoing shift toward cloud computing is reshaping ingestion strategies. Avato provides cloud-based connectivity solutions, including data ingestion pipelines. These give organizations of all sizes the scalability and flexibility to adapt their ingestion methods as requirements evolve.
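The anomaly detection mentioned under Enhanced Automation can be illustrated with a deliberately simple baseline. The sketch below flags days whose ingested record count deviates from the trailing window by more than a z-score threshold. It is a plain statistical check, not the context-aware methods described above. The 7-day window and the threshold of 3 are assumptions, and the window must contain at least two days for a standard deviation to exist.

```python
from statistics import mean, stdev


def volume_anomalies(daily_counts: list[int], window: int = 7,
                     z_threshold: float = 3.0) -> list[int]:
    """Return indexes of days whose record count is anomalous versus the prior `window` days."""
    flagged = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Flat history: any change at all is unusual.
            if daily_counts[i] != mu:
                flagged.append(i)
        elif abs(daily_counts[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


counts = [100, 102, 98, 101, 99, 100, 103, 100, 20, 101]
print(volume_anomalies(counts))  # [8]
```

Because the trailing window includes recent outliers, a sharp drop inflates the baseline’s spread for the following days and can mask further anomalies. Context-aware detectors reduce this effect, for example by excluding flagged points or accounting for weekly seasonality.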

As we approach 2025, these trends will continue to influence the landscape of information collection, equipping IT managers with the tools and insights necessary to navigate the complexities of contemporary information environments. The combination of AI and machine learning, especially generative AI, will be pivotal in enhancing information processing, ensuring that organizations can leverage their assets effectively. Furthermore, as Gustavo Estrada from BC Provincial Health Services Authority states, “Avato has streamlined complex projects and produced outcomes within expected time frames and budget limitations,” highlighting the effectiveness of cohesive solutions in addressing these trends.

Avato’s differentiation through speed, security, and simplicity in integration, supported by features such as 24/7 uptime and robust error handling, further underscores its relevance as data ingestion practices evolve.

Conclusion

In today’s rapidly evolving digital landscape, the significance of effective data ingestion cannot be overstated. This article has explored the essential components of data ingestion, including best practices, common challenges, and emerging trends that IT managers must navigate to maintain a competitive edge. Establishing robust data ingestion pipelines is crucial, as these systems not only enhance data availability but also enable organizations to make informed decisions swiftly.

The discussion highlighted various data ingestion methods—batch, real-time, and hybrid—each tailored to specific business needs. Understanding the nuances of these approaches allows organizations to optimize their data processing strategies, ensuring that they can adapt to varying demands while maintaining high data quality. Furthermore, the importance of designing a resilient pipeline architecture and implementing rigorous data validation practices was emphasized, as these factors are pivotal to achieving operational efficiency and reliability.

As the landscape of data ingestion continues to evolve, embracing automation and integrating advanced technologies like AI and machine learning will be key to overcoming challenges and enhancing data quality. Organizations must also prioritize security and compliance, especially in regulated industries, to build trust and safeguard sensitive information.

By focusing on these critical areas, IT managers can effectively harness the power of data, driving innovation and strategic decision-making within their organizations. The future of data ingestion lies in adopting scalable, automated solutions that not only streamline processes but also empower businesses to thrive in an increasingly data-driven world.